The Inference Standoff

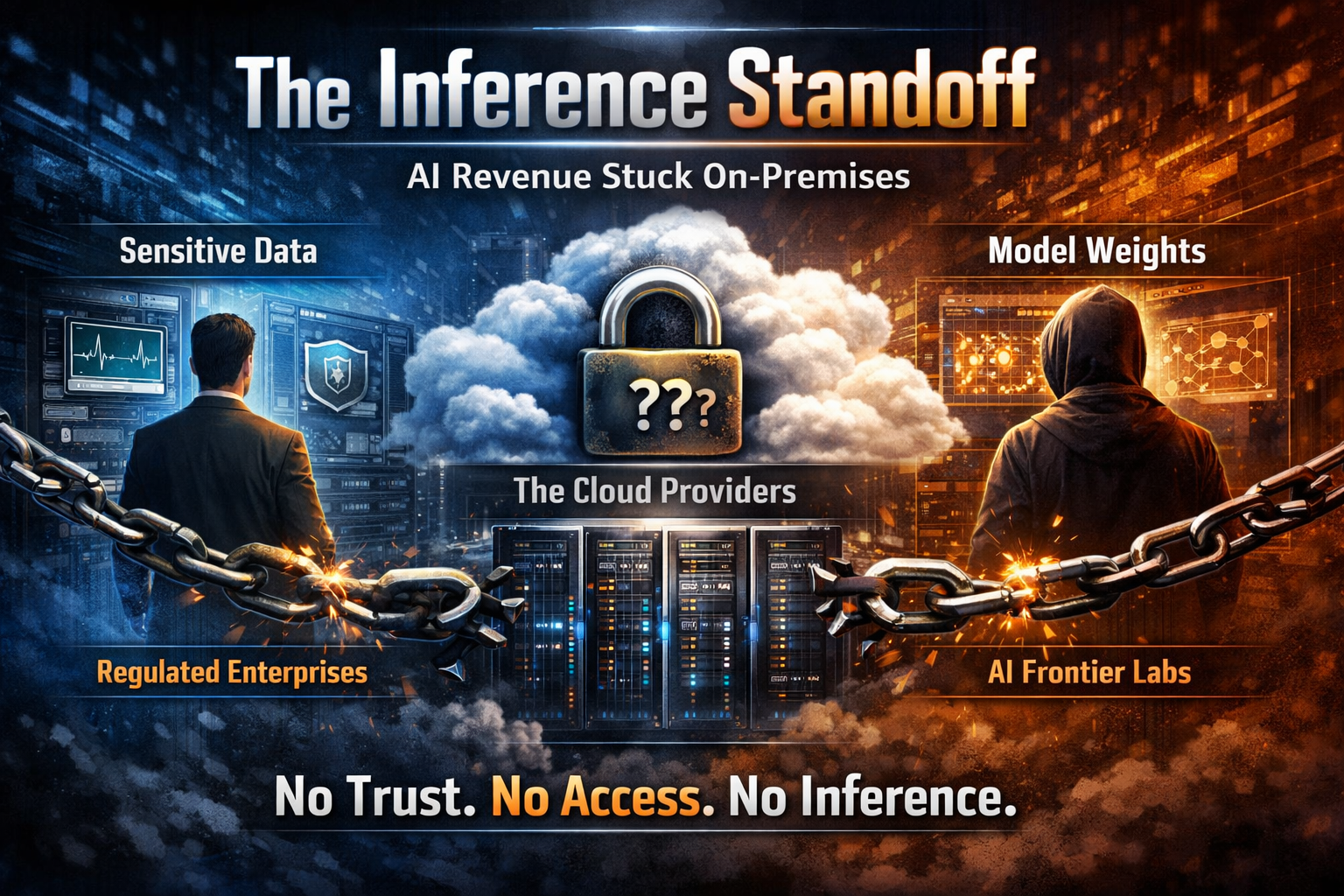

Billions in potential AI revenue is stuck on-prem.

Regulated enterprises won't let sensitive data leave their walls. Frontier labs won't share model weights. Both are required in the same place for inference to run. I call this the inference standoff.

Intelligence on Tap

AI inference is becoming intelligence on tap: something every company, big or small, wants, or even needs, to plug into. Anthropic just announced $9 billion in annualised revenue at the end of 2025. Over 1,000 enterprise customers are each spending more than $1 million a year on Claude alone. OpenAI is not far behind. These are among the fastest-growing companies in history.

But a huge slice of the market can't participate. Hospitals sitting on patient data that could transform diagnostics. Banks with transaction histories that could supercharge fraud detection. Defence contractors, law firms, insurance companies: all sitting on data that frontier models would be extraordinarily useful for, and none of it is going anywhere near a third-party API.

The reason is simple. Every prompt sent to a frontier model has to be decrypted before the model can process it, which means the lab and the infrastructure provider can, in principle, read it in plaintext. For regulated industries, that's a non-starter.

The Other Side of the Table

The labs have their own version of the same problem.

Model weights are the most valuable intellectual property in AI. They represent years of compute, billions in training costs, and the competitive moat that justifies hundred-billion-dollar valuations. Handing weights to an enterprise or a cloud provider means trusting their security posture with your crown jewels. One exfiltration event and the moat is gone.

So the labs hold weights close. Enterprises hold data close. Neither will budge. Inference requires both in the same place, and neither party is willing to go to the other.

The Third Party in the Room

There is a third player standing between the two: the cloud providers. From hyperscalers like AWS and Azure down to the neo-clouds, they want to host the compute layer. They have the GPUs. They have the data centre footprint. They want to run frontier models and serve inference to regulated enterprises.

But the standoff blocks them too. The lab won't send weights to infrastructure it doesn't control. The enterprise won't send data to infrastructure it doesn't control. The cloud provider controls the infrastructure and is trusted by neither.

Three parties. Three trust domains. No overlap.

Why Current Solutions Fall Short

The industry has tried to work around this. None of the workarounds hold up.

Open-source models deployed on-prem. This avoids the weight-sharing problem entirely, but at a steep cost. Open-source models lag frontier models in capability. For enterprises that need the best available intelligence, this is a compromise, not a solution.

Federated learning. The data stays local, but the abstraction is wrong for inference. Federated learning is a training technique. It doesn't solve the problem of running a frontier model against sensitive data in real time.

Homomorphic encryption. Fully homomorphic encryption (FHE) computes on encrypted data without ever decrypting it. Elegant in theory. In practice, FHE adds orders of magnitude of overhead to inference workloads. It is nowhere near production-ready for LLM-scale computation.

Each of these addresses one side of the standoff while ignoring the other, or introduces performance penalties that make the solution unusable.

Breaking the Deadlock

Confidential computing, built on Trusted Execution Environments (TEEs), breaks the inference standoff by removing the need for the three parties to trust one another.

A TEE is a hardware-isolated enclave on the CPU or GPU. Code and data inside the enclave are encrypted in memory and invisible to the host operating system, the hypervisor, and the infrastructure operator. Not even a root-privileged admin can see what's inside.

Here's what that means for the standoff:

The enterprise sends encrypted data to the TEE. It is decrypted only inside the enclave. The lab's model weights are loaded into the same enclave, also encrypted at rest and decrypted only inside. Inference runs. The result is encrypted and returned to the enterprise. At no point does the cloud provider see either the data or the weights in plaintext. The lab never sees the enterprise's data, and the enterprise never sees the lab's weights.
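To make that flow concrete, here is a minimal Python sketch of it. Everything in it is an assumption for illustration: `Enclave`, `toy_cipher`, and `seal` are hypothetical names, the "model" is a placeholder that only counts bytes, and the cipher is a toy SHA-256 keystream standing in for the authenticated encryption (such as AES-GCM) a real deployment would use. It only shows who holds which key and where plaintext exists.

```python
import hashlib
import secrets


def toy_cipher(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """XOR data with a SHA-256 counter keystream (same call encrypts and decrypts).

    Toy stand-in for real authenticated encryption. Do not use in production.
    """
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


def seal(key: bytes, data: bytes) -> tuple[bytes, bytes]:
    """Encrypt data under a fresh random nonce; returns (nonce, ciphertext)."""
    nonce = secrets.token_bytes(12)
    return nonce, toy_cipher(key, nonce, data)


class Enclave:
    """Hypothetical enclave: keys and plaintext exist only inside this object."""

    def __init__(self) -> None:
        self._keys: dict[str, bytes] = {}

    def provision_key(self, owner: str, key: bytes) -> None:
        # Assumption: in a real system each owner releases its key over an
        # encrypted channel, and only after attestation succeeds.
        self._keys[owner] = key

    def infer(self, sealed_weights: tuple[bytes, bytes],
              sealed_prompt: tuple[bytes, bytes]) -> tuple[bytes, bytes]:
        # Decryption happens only here, inside the enclave boundary.
        weights = toy_cipher(self._keys["lab"], *sealed_weights)
        prompt = toy_cipher(self._keys["enterprise"], *sealed_prompt)
        # Placeholder for the actual model forward pass.
        result = f"{len(prompt)} prompt bytes scored with {len(weights)} weight bytes"
        # The result leaves the enclave encrypted under the enterprise's key.
        return seal(self._keys["enterprise"], result.encode())


lab_key = secrets.token_bytes(32)         # held by the lab
enterprise_key = secrets.token_bytes(32)  # held by the enterprise

enclave = Enclave()
enclave.provision_key("lab", lab_key)
enclave.provision_key("enterprise", enterprise_key)

# What the cloud provider stores and forwards: ciphertext only.
sealed_weights = seal(lab_key, b"\x01\x02\x03\x04")
sealed_prompt = seal(enterprise_key, b"patient record 1234")

sealed_reply = enclave.infer(sealed_weights, sealed_prompt)
reply = toy_cipher(enterprise_key, *sealed_reply).decode()
print(reply)
```

The point of the sketch is the key layout: the lab's key never reaches the enterprise, the enterprise's key never reaches the lab, and the host in between only ever handles `sealed_*` values.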

Neither party has to trust the other. Neither party has to trust the infrastructure. The hardware enforces confidentiality. Attestation, a cryptographic proof generated by the TEE, lets both sides verify that the enclave is running the code it claims to be running, on genuine hardware, before they send anything.
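The attestation check can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not any vendor's actual protocol: `Report`, `enclave_attest`, and `verify` are hypothetical names, and a shared HMAC key stands in for the hardware root of trust. Real TEEs sign their reports with an asymmetric key that chains back to the chip vendor, so the verifier never holds a secret.

```python
import hashlib
import hmac
import secrets
from typing import NamedTuple

# Assumption: stand-in for the hardware root of trust. A real verifier would
# check an asymmetric signature against the vendor's certificate chain.
HARDWARE_KEY = secrets.token_bytes(32)


class Report(NamedTuple):
    measurement: bytes  # hash of the code loaded into the enclave
    nonce: bytes        # verifier-supplied challenge, proves freshness
    signature: bytes    # produced by the hardware over the fields above


def enclave_attest(code: bytes, nonce: bytes) -> Report:
    """What the hardware does: measure the loaded code and sign the result."""
    measurement = hashlib.sha256(code).digest()
    signature = hmac.new(HARDWARE_KEY, measurement + nonce, hashlib.sha256).digest()
    return Report(measurement, nonce, signature)


def verify(report: Report, expected_measurement: bytes, nonce: bytes) -> bool:
    """What the lab or enterprise does before releasing weights or data."""
    expected_sig = hmac.new(
        HARDWARE_KEY, report.measurement + report.nonce, hashlib.sha256
    ).digest()
    return (
        hmac.compare_digest(report.signature, expected_sig)             # genuine hardware
        and hmac.compare_digest(report.measurement, expected_measurement)  # expected code
        and hmac.compare_digest(report.nonce, nonce)                    # not a replay
    )


# Both sides agree in advance on the code they are willing to run against.
audited_code = b"inference-server v1"
expected = hashlib.sha256(audited_code).digest()

nonce = secrets.token_bytes(16)
honest = enclave_attest(audited_code, nonce)
tampered = enclave_attest(b"inference-server v1 + logging backdoor", nonce)

print(verify(honest, expected, nonce))                    # True
print(verify(tampered, expected, nonce))                  # False
print(verify(honest, expected, secrets.token_bytes(16)))  # False
```

Only when `verify` returns `True` would either party release its decryption key to the enclave. A changed binary produces a different measurement, and a stale report fails the nonce check.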

The enterprise's data never leaves its control in any meaningful sense. The lab's weights never leave its control either. The cloud provider gets to host the workload. All three parties get what they want.

What This Unlocks

The inference standoff isn't just a security problem. It's a distribution problem. It limits where frontier models can run, which limits who can buy them, which limits how fast the market grows.

Break the standoff and the frontier labs can deploy to regulated industries without giving up IP. Hospitals, banks, government agencies: all suddenly addressable. The labs extend their market. The enterprises get access to the best models. The cloud providers get to serve the compute.

This is why I believe private inference won't stay a premium feature for long. It will become the baseline. The lab that ships it as a default, not as an add-on, will do what Chrome did to HTTP in 2018, when it began labelling unencrypted sites "Not secure": set a new standard that everyone else has to follow.

The standoff has a solution. The question now is who moves first.